Symbolic Regression Benchmark Functions

At last year's GECCO in Dublin, one discussion centered on the genetic programming community's need for a set of suitable benchmark problems. Many experiments presented in the GP literature are based on very simple toy problems, so the results are often unconvincing. The whole topic is summarized at http://gpbenchmarks.org.

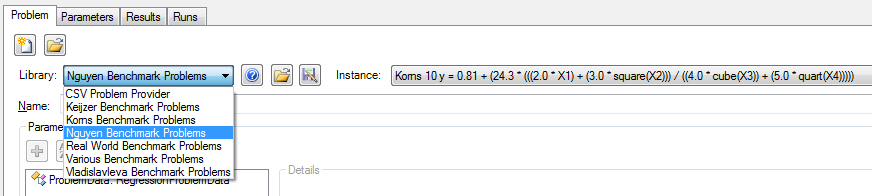

That site also lists benchmark problems for symbolic regression from a number of different papers. Thanks to our new developer Stefan Forstenlechner, most of these problems are now available in HeuristicLab and will be included in the next release. The benchmark problems can be loaded directly in the GUI through problem instance providers. Additionally, the new 'Create Experiment' dialog implemented by Andreas (see the previous blog post) makes it very simple to create an experiment that executes an algorithm on all instances.

I used these new features to quickly apply a random forest regression algorithm (R=0.7, Number of trees=50) to all regression benchmark problems and got the following results. Let's see how symbolic regression with GP will perform...

| Problem instance | Avg. R² (test) |
| --- | --- |
| Keijzer 4 f(x) = 0.3 * x * sin(2 * PI * x) | 0.984 |
| Keijzer 5 f(x) = x ^ 3 * exp(-x) * cos(x) * sin(x) * (sin(x) ^ 2 * cos(x) - 1) | 1.000 |
| Keijzer 6 f(x) = (30 * x * z) / ((x - 10) * y^2) | 0.956 |
| Keijzer 7 f(x) = Sum(1 / i) From 1 to X | 0.911 |
| Keijzer 8 f(x) = log(x) | 1.000 |
| Keijzer 9 f(x) = sqrt(x) | 1.000 |
| Keijzer 11 f(x, y) = x ^ y | 0.957 |
| Keijzer 12 f(x, y) = xy + sin((x - 1)(y - 1)) | 0.267 |
| Keijzer 13 f(x, y) = x^4 - x^3 + y^2 / 2 - y | 0.610 |
| Keijzer 14 f(x, y) = 6 * sin(x) * cos(y) | 0.321 |
| Keijzer 15 f(x, y) = 8 / (2 + x^2 + y^2) | 0.484 |
| Keijzer 16 f(x, y) = x^3 / 5 + y^3 / 2 - y - x | 0.599 |
| Korns 1 y = 1.57 + (24.3 * X3) | 0.998 |
| Korns 2 y = 0.23 + (14.2 * ((X3 + X1) / (3.0 * X4))) | 0.009 |
| Korns 3 y = -5.41 + (4.9 * (((X3 - X0) + (X1 / X4)) / (3 * X4))) | 0.023 |
| Korns 4 y = -2.3 + (0.13 * sin(X2)) | 0.384 |
| Korns 5 y = 3.0 + (2.13 * log(X4)) | 0.977 |
| Korns 6 y = 1.3 + (0.13 * sqrt(X0)) | 0.997 |
| Korns 7 y = 213.80940889 - (213.80940889 * exp(-0.54723748542 * X0)) | 0.000 |
| Korns 8 y = 6.87 + (11 * sqrt(7.23 * X0 * X3 * X4)) | 0.993 |
| Korns 9 y = ((sqrt(X0) / log(X1)) * (exp(X2) / square(X3))) | 0.000 |
| Korns 10 y = 0.81 + (24.3 * (((2.0 * X1) + (3.0 * square(X2))) / ((4.0 * cube(X3)) + (5.0 * quart(X4))))) | 0.003 |
| Korns 11 y = 6.87 + (11 * cos(7.23 * X0 * X0 * X0)) | 0.000 |
| Korns 12 y = 2.0 - (2.1 * (cos(9.8 * X0) * sin(1.3 * X4))) | 0.001 |
| Korns 13 y = 32.0 - (3.0 * ((tan(X0) / tan(X1)) * (tan(X2) / tan(X3)))) | 0.000 |
| Korns 14 y = 22.0 + (4.2 * ((cos(X0) - tan(X1)) * (tanh(X2) / sin(X3)))) | 0.000 |
| Korns 15 y = 12.0 - (6.0 * ((tan(X0) / exp(X1)) * (log(X2) - tan(X3)))) | 0.000 |
| Nguyen F1 = x^3 + x^2 + x | 0.944 |
| Nguyen F2 = x^4 + x^3 + x^2 + x | 0.992 |
| Nguyen F3 = x^5 + x^4 + x^3 + x^2 + x | 0.983 |
| Nguyen F4 = x^6 + x^5 + x^4 + x^3 + x^2 + x | 0.960 |
| Nguyen F5 = sin(x^2)cos(x) - 1 | 0.975 |
| Nguyen F6 = sin(x) + sin(x + x^2) | 0.997 |
| Nguyen F7 = log(x + 1) + log(x^2 + 1) | 0.977 |
| Nguyen F8 = Sqrt(x) | 0.966 |
| Nguyen F9 = sin(x) + sin(y^2) | 0.988 |
| Nguyen F10 = 2sin(x)cos(y) | 0.986 |
| Nguyen F11 = x^y | 0.961 |
| Nguyen F12 = x^4 - x^3 + y^2/2 - y | 0.979 |
| Spatial co-evolution F(x,y) = 1/(1+power(x,-4)) + 1/(1+pow(y,-4)) | 0.983 |
| TowerData | 0.972 |
| Vladislavleva Kotanchek | 0.854 |
| Vladislavleva RatPol2D | 0.785 |
| Vladislavleva RatPol3D | 0.795 |
| Vladislavleva Ripple | 0.951 |
| Vladislavleva Salutowicz | 0.996 |
| Vladislavleva Salutowicz2D | 0.960 |
| Vladislavleva UBall5D | 0.892 |
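
To make the R² column easier to interpret, here is a minimal Python sketch of one common way to compute it. It assumes that R² means the squared Pearson correlation between target and predicted values; the post does not state which definition was used. The sampling range for Keijzer 4, the sample count, and the helper names `keijzer_4` and `pearson_r2` are illustrative assumptions, not taken from HeuristicLab.

```python
import math
import random


def keijzer_4(x):
    """Keijzer 4 as listed in the table: 0.3 * x * sin(2 * PI * x)."""
    return 0.3 * x * math.sin(2 * math.pi * x)


def pearson_r2(targets, predictions):
    """Squared Pearson correlation between targets and predictions."""
    n = len(targets)
    mean_t = sum(targets) / n
    mean_p = sum(predictions) / n
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(targets, predictions))
    var_t = sum((t - mean_t) ** 2 for t in targets)
    var_p = sum((p - mean_p) ** 2 for p in predictions)
    if var_t == 0 or var_p == 0:
        # Correlation is undefined for a constant series; report 0 here.
        return 0.0
    return cov * cov / (var_t * var_p)


rng = random.Random(42)
xs = [rng.uniform(-1.0, 1.0) for _ in range(200)]  # assumed sampling range
ys = [keijzer_4(x) for x in xs]

perfect = pearson_r2(ys, ys)                        # predictions equal targets
scaled = pearson_r2(ys, [2 * y + 1 for y in ys])    # linearly rescaled predictions
constant = pearson_r2(ys, [0.0] * len(ys))          # constant predictions

print(perfect, scaled, constant)
```

Under this definition a model that is off only by a linear scale and offset still scores close to 1, while a model that predicts a constant scores 0.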

Parameter Variation Experiments in Upcoming HeuristicLab Release

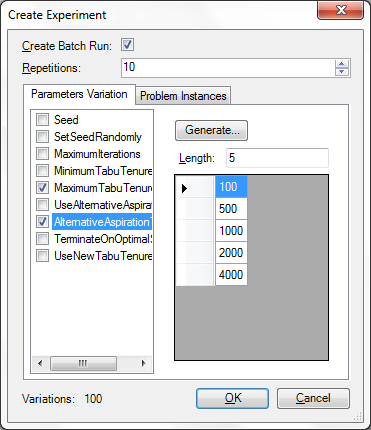

We've included a new feature in the upcoming release of HeuristicLab 3.3.7 that makes it more convenient to create parameter variation experiments.

Metaheuristics and data analysis methods often have a number of parameters that strongly influence their behavior and thus the quality you obtain when applying them to a certain problem. The best parameters are usually not known a priori, so you can either use metaoptimization (available from the download page under "Additional packages") or create a set of experiments in which each parameter is varied. In the upcoming release we've made this variation task a lot easier.

We have enhanced the "Create Experiments" dialog that is available through the Edit menu. To try out the new feature, you can obtain the latest daily build from the Download page and load one of the samples. The dialog lets you specify values for several parameters and creates an experiment in which all configurations are enumerated.
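
Enumerating all configurations amounts to taking the Cartesian product of the value lists. The following Python sketch illustrates the idea only; it is not how HeuristicLab implements the dialog, and the parameter names and values are hypothetical.

```python
from itertools import product

# Hypothetical parameter names and values, chosen only for illustration.
parameter_values = {
    "PopulationSize": [100, 500],
    "MutationProbability": [0.05, 0.10, 0.15],
    "Elites": [0, 1],
}


def enumerate_configurations(values_by_parameter):
    """Yield one dict per combination of parameter values (Cartesian product)."""
    names = list(values_by_parameter)
    value_lists = [values_by_parameter[name] for name in names]
    for combination in product(*value_lists):
        yield dict(zip(names, combination))


configurations = list(enumerate_configurations(parameter_values))
for config in configurations[:3]:
    print(config)
print(len(configurations), "configurations in total")
```

With two, three, and two values respectively, the enumeration yields 2 * 3 * 2 = 12 configurations, which shows how quickly the count grows with each additional parameter.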

We have also included the new problem instance infrastructure in this dialog, which lets you test certain configurations on a number of benchmark instances from several benchmark libraries.

Finally, here are a couple of points that you should be aware of to make effective use of this feature. You can view them as a kind of checklist to go through before creating and executing experiments:

- Before creating an experiment, make sure you prepare the algorithm accordingly: set every parameter that you do not want to vary to the value you intend. If the algorithm contains any runs, clear them first.

- Review the selected analyzers carefully. You may want to exclude the quality chart and other analyzers that would produce too much data for a large experiment, or have the analyzers produce output only every xth iteration.

- Make sure that only those problem and algorithm parameters that you need are checked for inclusion in the run. Think twice before showing a parameter in the run that requires a lot of memory.

- Make sure SetSeedRandomly (if available) is set to true if you intend to repeat each configuration.

- When you create experiments with dependent parameters, you have to resolve the dependencies and create separate experiments. For example, when one parameter specifies a lower bound and another specifies an upper bound, you should create a separate experiment for each lower bound so that you don't obtain configurations where the upper bound is lower than the lower bound.

- Finally, while you vary the parameters keep an eye on the number of variations. HeuristicLab doesn't prevent you from creating very large experiments, but if there are many variations you might want to create separate experiments.
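
The last two checklist items can be sketched in Python as follows. This is an illustration under stated assumptions, not HeuristicLab code: the bound values, the helper names `experiments_per_lower_bound` and `total_runs`, and the repetition and instance counts are all hypothetical.

```python
# Hypothetical values for two dependent parameters.
lower_bounds = [1, 5, 10]
upper_bounds = [5, 10, 20]


def experiments_per_lower_bound(lowers, uppers):
    """Build one experiment per lower bound, keeping only valid upper bounds."""
    experiments = {}
    for lower in lowers:
        # Skip configurations where the upper bound is below the lower bound.
        experiments[lower] = [(lower, upper) for upper in uppers if upper >= lower]
    return experiments


def total_runs(value_lists, repetitions, instances):
    """Number of runs created by fully enumerating the given value lists."""
    count = repetitions * instances
    for values in value_lists:
        count *= len(values)
    return count


experiments = experiments_per_lower_bound(lower_bounds, upper_bounds)
for lower, configs in experiments.items():
    print("lower bound", lower, "->", configs)

# Three parameters with 2, 3, and 2 values, 10 repetitions, 10 instances (assumed).
runs = total_runs([[100, 500], [0.05, 0.10, 0.15], [0, 1]], repetitions=10, instances=10)
print(runs, "runs")
```

Checking the run count this way before creating the experiment makes it easier to decide whether to split it into several smaller ones.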
