HeuristicLab 3.3.16 "Prague" Release

We are happy to announce the release of HeuristicLab 3.3.16, which is named after the location of this year's Genetic and Evolutionary Computation Conference (GECCO) in Prague, Czech Republic.

HeuristicLab 3.3.16 "Prague" contains the following new features:

- decision tree algorithms for regression problems

- integration of Google OR-Tools for writing and solving MIP/LP models

- new and much faster persistence API (HEAL.Attic) for saving and loading files

- management of projects in HeuristicLab Hive for assigning jobs and compute resources

- upgraded to .NET Framework 4.6.1

- several other performance improvements and bug fixes

For a full list of all changes have a look at the ChangeLog.

Go to the Download page or click on the image below to get HeuristicLab 3.3.16 "Prague" now!

HeuristicLab 3.3.15 "Berlin" Release

We are happy to announce the release of HeuristicLab 3.3.15, which is named after the location of last year's Genetic and Evolutionary Computation Conference (GECCO) in Berlin, Germany.

HeuristicLab 3.3.15 "Berlin" contains the following new features:

- New problems:

  - Graph Coloring

- New data analysis algorithms:

  - Barnes-Hut t-SNE (C# port of Laurens van der Maaten's C++ implementation)

  - Kernel Ridge Regression

  - Elastic-net Regression (glmnet wrapper)

- Support for categorical variables for symbolic regression and multiple data analysis algorithms

- PSO improvements (compatible with standard PSO 2011)

- Extreme-point based packing algorithm for 3D bin packing

- New functionalities for data preprocessing

- New functionality for run analysis (e.g. RLD/ECDF)

For a full list of all changes have a look at the ChangeLog.

Go to the Download page or click on the image below to get HeuristicLab 3.3.15 "Berlin" now!

Windows 10 Creators Update: Problems Resolved

Apparently Microsoft has provided a fix for the blue screen issues that we described in May, see Problems with the Windows 10 Creators Update.

The update KB4022716, released on June 27th, includes the fix. After applying this update through Windows Update, you can restore the nesting level to a more comfortable setting, e.g. 35. Changing the nesting level is described in this post for the current stable binaries and for the forthcoming 3.3.15 release version.

Users of the 3.3.14 release version cannot change the nesting level, as the limit was hardcoded. However, the hardcoded setting can be considered safe again once update KB4022716 is installed.

Implementing LAHC in HeuristicLab

Late Acceptance Hill-Climbing (LAHC) is a relatively recent single-solution metaheuristic; see the article by Burke and Bykov.

It has a single parameter that controls the chance of making a deteriorating move. The higher this parameter, the greater the chance for exploration and thus of reaching a higher-quality solution, but the more time is required for convergence. LAHC is not yet available in HeuristicLab as a standard algorithm. However, HeuristicLab makes it very easy to implement new algorithms through scripting, and in doing so we can reuse the included problem instances as well as the infrastructure for visualizing solutions and analyzing runs. The following is a C# script-based implementation of LAHC that optimizes solutions to the berlin52 TSP problem.

If you copy and paste the following code to a new C# script you can run the algorithm. In this code you also see how you can create run objects from scripts. The run contains the solution, algorithm and problem description and convergence graphs among other data. If you put this and other run objects in a RunCollection you can easily do a performance analysis. Hint: You must obtain the latest stable binaries from our download page to run this code.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics;
using System.Linq;
using HeuristicLab.Analysis;
using HeuristicLab.Common;
using HeuristicLab.Core;
using HeuristicLab.Data;
using HeuristicLab.Encodings.PermutationEncoding;
using HeuristicLab.Problems.Instances;
using HeuristicLab.Problems.Instances.TSPLIB;
using HeuristicLab.Problems.TravelingSalesman;
using HeuristicLab.Optimization;

public class LAHCScript : HeuristicLab.Scripting.CSharpScriptBase {
  public override void Main() {
    var random = new HeuristicLab.Random.MersenneTwister();
    var tsplib = new TSPLIBTSPInstanceProvider();
    var instance = tsplib.GetDataDescriptors()
                         .Single(x => x.Name == "berlin52");
    var tsp = tsplib.LoadData(instance);
    var dm = new DistanceMatrix(tsp.GetDistanceMatrix());

    Permutation best = null, current = null, candidate = null;
    var candidateFitness = double.MaxValue;
    var bestFitness = double.MaxValue;
    var currentFitness = double.MaxValue;

    IsBetter isBetter = (first, second, orEqual) => (first < second)
                        || (orEqual && first == second);

    InitializeFitness init = () => {
      candidate = new Permutation(
        PermutationTypes.RelativeUndirected, tsp.Dimension, random);
      current = candidate;
      best = candidate;
      candidateFitness = TourLength(candidate, dm);
      currentFitness = candidateFitness;
      bestFitness = candidateFitness;
      return candidateFitness;
    };

    NextFitness next = () => {
      candidate = new Permutation(
        PermutationTypes.RelativeUndirected, current);
      var move = StochasticInversionSingleMoveGenerator
        .Apply(candidate, random);
      candidateFitness = currentFitness + TSPInversionMovePathEvaluator
        .EvaluateByDistanceMatrix(candidate, move, dm);
      // important to perform the move after the delta calculation
      InversionManipulator.Apply(candidate, move.Index1, move.Index2);
      return candidateFitness;
    };

    Accept accept = () => {
      current = candidate;
      currentFitness = candidateFitness;
      if (isBetter(candidateFitness, bestFitness, orEqual: false)) {
        best = candidate;
        bestFitness = candidateFitness;
      }
    };

    var run = LAHC(init, next, accept, isBetter, 5000);
    run.Parameters.Add("Problem Name",
      new StringValue(instance.Name));
    run.Parameters.Add("Problem Type",
      new StringValue(typeof(TravelingSalesmanProblem).Name));
    run.Parameters.Add("Maximization", new BoolValue(false));
    if (tsp.BestKnownQuality.HasValue)
      run.Parameters.Add("BestKnownQuality",
        new DoubleValue(tsp.BestKnownQuality.Value));

    var solution = new PathTSPTour(new DoubleMatrix(tsp.Coordinates),
      best, new DoubleValue(bestFitness));
    run.Results.Add("Best Solution", solution);

    HeuristicLab.MainForm.MainFormManager.MainForm.ShowContent(run);
  }

  private static double TourLength(Permutation perm, DistanceMatrix dm) {
    var length = dm[perm.Last(), perm.First()];
    for (var i = 1; i < perm.Length; i++) {
      length += dm[perm[i - 1], perm[i]];
    }
    return length;
  }

  public delegate double InitializeFitness();
  public delegate double NextFitness();
  public delegate void Accept();
  public delegate bool IsBetter(double first, double second, bool orEqual);

  public static Run LAHC(InitializeFitness init, NextFitness next,
                         Accept accept, IsBetter isBetter, int listSize,
                         int minTries = 100000) {
    var sw = Stopwatch.StartNew();
    var cgraph_fe = new IndexedDataTable<double>("Convergence Graph FE");
    var cgraph_wc = new IndexedDataTable<double>("Convergence Graph Time");
    var crow_fe = new IndexedDataRow<double>("First-hit");
    var crow_wc = new IndexedDataRow<double>("First-hit");
    cgraph_fe.Rows.Add(crow_fe);
    cgraph_fe.VisualProperties.XAxisLogScale = true;
    cgraph_wc.Rows.Add(crow_wc);
    cgraph_wc.VisualProperties.XAxisLogScale = true;

    var bestFit = init();
    var globalIter = 0;
    var memory = new double[listSize];
    for (var v = 0; v < listSize; v++) {
      memory[v] = bestFit;
    }

    Tuple<int, double> last = null;
    foreach (var s in
             LAHC(next, accept, isBetter, bestFit, memory, minTries)) {
      if (isBetter(s.Item2, bestFit, orEqual: false)) {
        bestFit = s.Item2;
        crow_fe.Values.Add(
          Tuple.Create((double)globalIter + s.Item1, s.Item2));
        crow_wc.Values.Add(
          Tuple.Create(sw.ElapsedTicks / (double)Stopwatch.Frequency,
                       s.Item2));
      }
      last = s;
    }
    globalIter += last.Item1;
    sw.Stop();

    crow_fe.Values.Add(Tuple.Create((double)globalIter, bestFit));
    crow_wc.Values.Add(
      Tuple.Create(sw.ElapsedTicks / (double)Stopwatch.Frequency,
                   bestFit));

    var run = new Run() { Name = "LAHC-" + listSize };
    run.Parameters.Add("Algorithm Name", new StringValue("LAHC"));
    run.Parameters.Add("ListSize", new IntValue(listSize));
    run.Parameters.Add("MinTries", new IntValue(minTries));
    run.Parameters.Add("Algorithm Type", new StringValue("LAHC"));
    run.Results.Add("ExecutionTime", new TimeSpanValue(
      TimeSpan.FromSeconds(sw.ElapsedTicks
                           / (double)Stopwatch.Frequency)));
    run.Results.Add("EvaluatedSolutions", new IntValue(globalIter));
    run.Results.Add("BestQuality", new DoubleValue(bestFit));
    run.Results.Add("QualityPerEvaluations", cgraph_fe);
    run.Results.Add("QualityPerClock", cgraph_wc);
    return run;
  }

  private static IEnumerable<Tuple<int, double>> LAHC(
      NextFitness nextFitness, Accept doAccept,
      IsBetter isBetter, double fit, double[] memory,
      int minTries) {
    var bestFit = fit;
    var tries = 0;
    var lastSuccess = 0;
    var l = memory.Length;
    while (tries < minTries || (tries - lastSuccess) < tries * 0.02) {
      var nextFit = nextFitness();
      var v = tries % l;
      tries++;
      var prevFit = memory[v];
      var accept = isBetter(nextFit, fit, orEqual: true)
                   || isBetter(nextFit, prevFit, orEqual: true);
      if (accept && isBetter(nextFit, fit, orEqual: false))
        lastSuccess = tries;
      if (accept) {
        if (isBetter(nextFit, bestFit, orEqual: false)) {
          bestFit = nextFit;
          yield return Tuple.Create(tries, bestFit);
        }
        fit = nextFit;
        doAccept();
      }
      if (isBetter(fit, prevFit, orEqual: false))
        memory[v] = fit;
    }
    yield return Tuple.Create(tries, bestFit);
  }
}
```
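The acceptance rule at the core of the script can also be looked at in isolation. The following is a minimal sketch without any HeuristicLab dependencies; `LahcSketch`, `Minimize` and its parameters are hypothetical names chosen for illustration. It assumes minimization and uses the same memory update as the script (an entry is only overwritten when the current cost is better than the stored one), which is one of several LAHC variants.

```csharp
using System;

public static class LahcSketch {
  // Minimizes cost(x) starting from start; neighbor proposes a candidate from the current solution.
  public static T Minimize<T>(T start, Func<T, double> cost, Func<T, T> neighbor,
                              int listSize, int iterations) {
    var current = start;
    var currentCost = cost(start);
    var best = current;
    var bestCost = currentCost;

    // the fitness memory is initialized with the cost of the start solution
    var memory = new double[listSize];
    for (var i = 0; i < listSize; i++) memory[i] = currentCost;

    for (var iter = 0; iter < iterations; iter++) {
      var candidate = neighbor(current);
      var candidateCost = cost(candidate);
      var v = iter % listSize;

      // accept if not worse than the current solution,
      // or not worse than the cost remembered from listSize iterations ago
      if (candidateCost <= currentCost || candidateCost <= memory[v]) {
        current = candidate;
        currentCost = candidateCost;
        if (currentCost < bestCost) {
          best = current;
          bestCost = currentCost;
        }
      }

      // only overwrite the memory entry with a better value
      if (currentCost < memory[v]) memory[v] = currentCost;
    }
    return best;
  }
}
```

For example, `LahcSketch.Minimize<int>(10, x => (double)Math.Abs(x - 7), x => x - 1, 3, 10)` walks from 10 down to 7, accepts one deteriorating step to 6 because the memory still holds an older, worse cost, and returns 7 as the best solution found. A larger `listSize` keeps old costs around for longer and therefore admits more deteriorating moves.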

External Evaluation with Programmable Problems

The ExternalEvaluationProblem in HeuristicLab can be used when the fitness calculation has to be done by a separate process. Setting up such a problem is not difficult and is covered in a tutorial. However, because of its simplicity the external evaluation problem is limited in what you can do: you have little control over how the SolutionMessage is filled, and you can do less with the QualityMessage that you obtain in return, e.g. regarding custom extensions.

If you need more freedom, you can also use the programmable problem in HeuristicLab to do external evaluations. In the following code snippet, the programmable problem's Evaluate method uses an external client to do the evaluation. It expects a process running on localhost that listens on port 2112 for solution messages.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Threading;
using HeuristicLab.Common;
using HeuristicLab.Collections;
using HeuristicLab.Core;
using HeuristicLab.Data;
using HeuristicLab.Encodings.BinaryVectorEncoding;
using HeuristicLab.Optimization;
using HeuristicLab.Problems.Programmable;
using Google.ProtocolBuffers;
using HeuristicLab.Problems.ExternalEvaluation;

namespace HeuristicLab.Problems.Custom {
  public class ExternalKnapsack : CompiledProblemDefinition, ISingleObjectiveProblemDefinition {
    public bool Maximization { get { return true; } }

    public override void Initialize() {
      // define the problem data first, the encoding length depends on it
      vars.weights = new IntArray(new [] { 2, 3, 7, 5, 9, 7, 6, 5, 7, 3, 2, 1, 5 });
      vars.values = new IntArray(new [] { 2, 9, 5, 1, 9, 4, 5, 7, 8, 5, 9, 3, 7 });
      vars.maxWeight = new IntValue(43);
      Encoding = new BinaryVectorEncoding("kp", length: ((IntArray)vars.weights).Length);

      vars.cache = new EvaluationCache();
      var clients = new CheckedItemCollection<IEvaluationServiceClient>();
      var client = new EvaluationServiceClient();
      client.ChannelParameter.Value = new EvaluationTCPChannel("127.0.0.1", 2112);
      clients.Add(client);
      vars.clients = clients;
      vars.messageBuilder = new SolutionMessageBuilder();
    }

    public double Evaluate(Individual individual, IRandom random) {
      var solutionMessage = BuildSolutionMessage(individual);
      var cache = (EvaluationCache)vars.cache;
      return (cache == null
        ? EvaluateOnNextAvailableClient(solutionMessage)
        : cache.GetValue(solutionMessage, EvaluateOnNextAvailableClient,
                         GetQualityMessageExtensions()))
        .GetExtension(SingleObjectiveQualityMessage.QualityMessage_).Quality;
    }

    private HashSet<IEvaluationServiceClient> activeClients =
      new HashSet<IEvaluationServiceClient>();
    private object clientLock = new object();

    public virtual ExtensionRegistry GetQualityMessageExtensions() {
      var extensions = ExtensionRegistry.CreateInstance();
      extensions.Add(SingleObjectiveQualityMessage.QualityMessage_);
      return extensions;
    }

    private QualityMessage EvaluateOnNextAvailableClient(SolutionMessage message) {
      var clients = (CheckedItemCollection<IEvaluationServiceClient>)vars.clients;
      IEvaluationServiceClient client = null;
      lock (clientLock) {
        // wait until one of the checked clients is not busy
        client = clients.CheckedItems.FirstOrDefault(c => !activeClients.Contains(c));
        while (client == null && clients.CheckedItems.Any()) {
          Monitor.Wait(clientLock);
          client = clients.CheckedItems.FirstOrDefault(c => !activeClients.Contains(c));
        }
        if (client != null)
          activeClients.Add(client);
      }
      try {
        return client.Evaluate(message, GetQualityMessageExtensions());
      } finally {
        lock (clientLock) {
          // release the client and wake up waiting threads
          activeClients.Remove(client);
          Monitor.PulseAll(clientLock);
        }
      }
    }

    private SolutionMessage BuildSolutionMessage(Individual individual,
                                                 int solutionId = 0) {
      var messageBuilder = (SolutionMessageBuilder)vars.messageBuilder;
      lock (clientLock) {
        SolutionMessage.Builder protobufBuilder = SolutionMessage.CreateBuilder();
        protobufBuilder.SolutionId = solutionId;
        foreach (var variable in individual.Values) {
          try {
            messageBuilder.AddToMessage(variable.Value, variable.Key, protobufBuilder);
          }
          catch (ArgumentException ex) {
            throw new InvalidOperationException(
              string.Format(@"ERROR while building solution message:
Parameter {0} cannot be added to the message", variable.Key), ex);
          }
        }
        return protobufBuilder.Build();
      }
    }

    public void Analyze(Individual[] individuals, double[] qualities,
                        ResultCollection results, IRandom random) {
      // ...
    }

    public IEnumerable<Individual> GetNeighbors(Individual individual, IRandom random) {
      // ...
      yield break;
    }
  }
}
```

Please bear in mind that you have to use the ParallelEngine in your algorithms to make use of multiple clients at the same time. Any code that you add to Evaluate thus needs to be thread-safe.

Update: Problems with the Windows 10 Creators Update

The blue screen issue on the Windows 10 Creators Update is caused by a high nesting of controls (Stack Overflow, Microsoft Support). Previously, exceeding the maximum nesting level was rarely encountered and a warning was issued in the worst case. Since the Windows 10 Creators Update, the maximum nesting level is much lower and exceeding it immediately results in a blue screen.

To avoid exceeding the maximum nesting level, HeuristicLab can intercept further nesting by opening a new tab instead as soon as a defined nesting threshold is reached. This threshold can be changed by selecting View -> Change Nesting Level... in the menu bar of HeuristicLab (implemented in #2788). For users that have the Windows 10 Creators Update installed, we recommend a maximum nesting of 25. Additionally, HeuristicLab now detects whether the Creators Update is installed and issues a warning if the nesting threshold is too high.

To enable the user-configurable nesting threshold, please download the latest HeuristicLab version (HeuristicLab stable branch binaries).

Problems with the Windows 10 Creators Update

There seem to be problems with the so-called "Creators Update" for Windows 10. Several users are experiencing blue screens after they have upgraded their Windows installation. The blue screen may occur simply by clicking around in the parameters' text boxes. We are not yet sure what suddenly causes these problems.

HeuristicLab still works fine with the Anniversary Update (build version 1607). At this point, if you want to use HeuristicLab, we think it is best to postpone installing the Creators Update until this problem has been resolved.

To check which version of Windows you have, press Windows-R and type "winver" without quotes in the box. If it reads "1703", you have the Creators Update installed.

Modeling scheduling problems with HeuristicLab

HeuristicLab makes it very easy to model scheduling problems. HeuristicLab ships with the simulation framework Sim#, a port of SimPy to C#. With Sim#, writing process-based simulation models requires little effort. Here, I demonstrate in only a few lines of code how to implement a permutation flow shop problem using a Programmable Problem (single-objective). You can create this problem in the GUI from the New Item dialog, paste the following code into the editor pane, and then hit compile. You can optimize solutions after you have dragged the problem to the Problem tab of an algorithm that can handle programmable permutation-based problems (e.g. Genetic Algorithm).

```csharp
using System;
using System.Linq;
using System.Collections.Generic;
using HeuristicLab.Core;
using HeuristicLab.Data;
using HeuristicLab.Encodings.PermutationEncoding;
using HeuristicLab.Optimization;
using IRandom = HeuristicLab.Core.IRandom;
using SimSharp;
using SysEnv = System.Environment;
using SimEnv = SimSharp.Environment;

namespace HeuristicLab.Problems.Programmable {
  public class FlowShopProblemDefinition : CompiledProblemDefinition,
                                           ISingleObjectiveProblemDefinition {
    private static readonly string[] machineSep = new [] { SysEnv.NewLine };
    private static readonly string[] jobSep = new [] { " ", "\t" };
    // from http://mistic.heig-vd.ch/taillard/problemes.dir/ordonnancement.dir/flowshop.dir/tai20_5.txt
    private static readonly string instance = @"
54 83 15 71 77 36 53 38 27 87 76 91 14 29 12 77 32 87 68 94
79 3 11 99 56 70 99 60 5 56 3 61 73 75 47 14 21 86 5 77
16 89 49 15 89 45 60 23 57 64 7 1 63 41 63 47 26 75 77 40
66 58 31 68 78 91 13 59 49 85 85 9 39 41 56 40 54 77 51 31
58 56 20 85 53 35 53 41 69 13 86 72 8 49 47 87 58 18 68 28
";

    private int M, J; // number of machines, jobs
    private List<List<int>> pij; // processing times per machine, job

    // objective is to minimize makespan
    public bool Maximization { get { return false; } }

    public override void Initialize() {
      // processing times are parsed from the instance
      pij = instance.Split(machineSep, StringSplitOptions.RemoveEmptyEntries)
                    .Select(x => x.Split(jobSep, StringSplitOptions.RemoveEmptyEntries)
                                  .Select(y => int.Parse(y)).ToList()).ToList();
      M = pij.Count;
      J = pij[0].Count;
      // encoding is created
      Encoding = new PermutationEncoding("p", length: J,
                                         type: PermutationTypes.Absolute);
    }

    public double Evaluate(Individual ind, IRandom random) {
      // create a new Sim# environment
      var env = new SimEnv();
      // create the M machines
      var machines = Enumerable.Range(0, M).Select(x => new Resource(env)).ToArray();
      // add the jobs in order given by the permutation
      foreach (var o in ind.Permutation("p"))
        env.Process(Job(env, o, machines));
      // run the simulation
      env.Run();
      // return the current simulation time (last finish time)
      return env.NowD;
    }

    private IEnumerable<Event> Job(SimEnv env, int j, Resource[] machines) {
      // for each of the M machines in order from 0 to M-1
      for (var m = 0; m < M; m++) {
        // create a new request for the next machine
        // the machine is freed after the using block
        using (var req = machines[m].Request()) {
          // wait upon obtaining the machine
          yield return req;
          // process the job j on machine m
          yield return env.TimeoutD(pij[m][j]);
        }
      }
    }

    public void Analyze(Individual[] individuals, double[] qualities,
                        ResultCollection results, IRandom random) { }

    // for move-based algorithms, all possible swap2 neighbors are returned
    public IEnumerable<Individual> GetNeighbors(Individual ind, IRandom random) {
      var p = ind.Permutation("p");
      foreach (var move in ExhaustiveSwap2MoveGenerator.Apply(p)) {
        var neighbor = ind.Copy();
        var perm = neighbor.Permutation("p");
        Swap2Manipulator.Apply(perm, move.Index1, move.Index2);
        yield return neighbor;
      }
    }
  }
}
```

This includes a "simple" 5 machine / 20 job problem instance from Taillard's instances. Of course, simulation makes it possible to build much more complex models.

Note: Starting from Sim# 3.1.0 the class SimSharp.Environment is deprecated and should be replaced with SimSharp.Simulation.
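For a plain permutation flow shop, the makespan can also be computed directly, which may be handy as a cross-check for the simulation model. The following is a minimal sketch of the standard completion-time recurrence. It assumes that every machine processes the jobs in permutation order with unlimited buffers in between, which is how the model above is expected to behave if machine requests are served first-come, first-served. `FlowShopCheck` and `Makespan` are hypothetical names and not part of HeuristicLab or Sim#.

```csharp
using System;

public static class FlowShopCheck {
  // p[m][j] is the processing time of job j on machine m,
  // order is the job permutation that is applied on every machine.
  public static int Makespan(int[][] p, int[] order) {
    var machines = p.Length;
    // finish[m] holds the completion time of the most recently scheduled job on machine m
    var finish = new int[machines];
    foreach (var j in order) {
      // the first machine processes the jobs back to back
      finish[0] += p[0][j];
      for (var m = 1; m < machines; m++) {
        // a job starts on machine m once the machine is free
        // and the job has finished on machine m - 1
        finish[m] = Math.Max(finish[m], finish[m - 1]) + p[m][j];
      }
    }
    return finish[machines - 1];
  }
}
```

With two machines and processing times `{ { 3, 2 }, { 2, 4 } }`, the order 0, 1 yields a makespan of 9, while the order 1, 0 yields 8. Once the model includes setup times, limited buffers or machine breakdowns, such a closed-form recurrence no longer applies, and this is where the simulation-based evaluation pays off.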

HeuristicLab 3.3.14 "Denver" Release

We are happy to announce the release of HeuristicLab 3.3.14, which we finished at this year's Genetic and Evolutionary Computation Conference (GECCO) in Denver, CO, USA.

HeuristicLab 3.3.14 "Denver" contains the following new features:

- New problems:

  - Bin Packing

  - Probabilistic TSP

  - Multi-Objective Testfunctions

- New data analysis algorithms:

  - Gradient Boosted Regression

  - Nonlinear Regression

  - Elastic-Net

- Gradient Charts

For a full list of all changes have a look at the ChangeLog.

Go to the Download page or click on the image below to get HeuristicLab 3.3.14 "Denver" now!

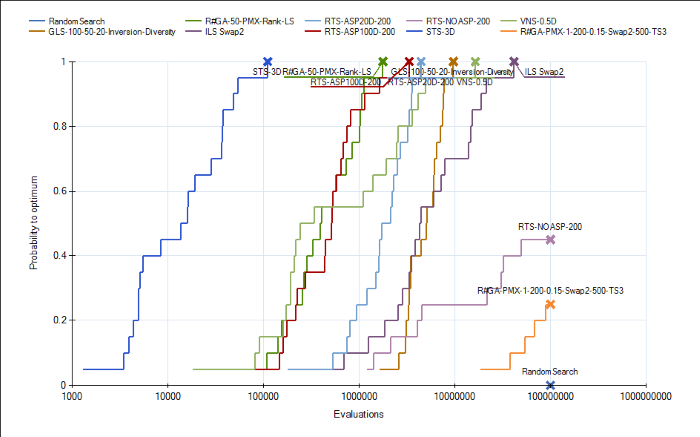

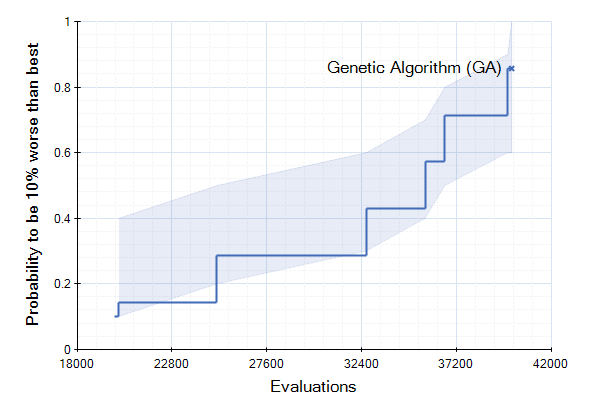

Run-Length Distribution Analysis with HeuristicLab

Today we added new analyzers for measuring algorithm performance to our trunk. The analyzers are called QualityPerEvaluationsAnalyzer and QualityPerClockAnalyzer. They record the convergence graph in each run and add it as a result. A new run analyzer called "Run-length Distribution View" can be used to build so-called ECDFs (empirical cumulative distribution functions) out of the convergence graphs in the individual runs using a single or multiple targets. This allows plotting the cumulative success probability of reaching a certain fitness value given a certain effort (measured in evaluated solutions or elapsed wall-clock time). These plots can be used to compare the run-length distributions of algorithm instances applied to one or many problem instances.

The run-length distribution view can cope with runs of different length, so it does not simply plot the number of hits divided by the total number of runs in the experiment. Instead, the denominator is decreased whenever a run missed the target and terminated prematurely. Thus one can use shorter runs to obtain more accurate ECDFs for lower amounts of function evaluations. In order to visualize missed runs, we plot a shaded area for every curve that represents the min and max bounds for the ECDF. The lower bound is pessimistic and assumes that none of the prematurely terminated runs would have hit the target later on. The upper bound is optimistic and assumes that all prematurely terminated runs would have found the target immediately afterwards. The line that is based purely on the observed hits thus lies in between these two.
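The three values described above can be sketched for a single effort level as follows. This is only one reading of the description, not the code of the actual view. `RldSketch`, `RunRecord` and `EcdfAt` are hypothetical names, and a run counts as prematurely terminated if it never hit the target and its length is below the effort under consideration.

```csharp
using System;
using System.Linq;

public static class RldSketch {
  // FirstHit is the effort at which the run first reached the target (null if it never did),
  // Length is the total effort that the run was given.
  public sealed class RunRecord {
    public double? FirstHit { get; private set; }
    public double Length { get; private set; }
    public RunRecord(double? firstHit, double length) {
      FirstHit = firstHit;
      Length = length;
    }
  }

  // Returns the (lower, observed, upper) success proportions at the given effort.
  public static Tuple<double, double, double> EcdfAt(RunRecord[] runs, double effort) {
    if (runs.Length == 0) throw new ArgumentException("At least one run is required.");
    var total = runs.Length;
    var hits = runs.Count(r => r.FirstHit.HasValue && r.FirstHit.Value <= effort);
    // runs that stopped before this effort without ever hitting the target
    var missed = runs.Count(r => !r.FirstHit.HasValue && r.Length < effort);

    // pessimistic: none of the missed runs would have hit the target
    var lower = hits / (double)total;
    // observed: missed runs are removed from the denominator
    var remaining = total - missed;
    var observed = remaining > 0 ? hits / (double)remaining : double.NaN;
    // optimistic: every missed run would have hit right after it stopped
    var upper = (hits + missed) / (double)total;
    return Tuple.Create(lower, observed, upper);
  }
}
```

Take four runs as an example: two hit the target after 100 and 500 evaluations, one stopped after 200 evaluations without a hit, and one ran for 1000 evaluations without a hit. At an effort of 300 evaluations the sketch gives a lower bound of 0.25, an observed value of 1/3 and an upper bound of 0.5.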

Together with the other run analyzers this one provides another means for algorithm developers to test and compare their instances. Currently, this feature is part of the main development trunk.
