Implementing LAHC in HeuristicLab

Late Acceptance Hill-Climbing (LAHC) is a relatively recent single-solution metaheuristic, see Burke and Bykov's article:

It has a single parameter that controls the chance to make a deteroriating move. The higher this parameter the greater the chance for exploration and thus of reaching a higher quality solution, however the more time is required in convergence. LAHC is not available in HeuristicLab as a standard algorithm yet. But HeuristicLab makes it very easy to implement new algorithms through scripting and in doing so we can reuse the problem instances that we include as well as the infrastructure for visualizing solutions and analyzing runs. The following is a C# script-based implementation of LAHC that optimizes solutions to the berlin52 TSP problem.

If you copy and paste the following code to a new C# script you can run the algorithm. In this code you also see how you can create run objects from scripts. The run contains the solution, algorithm and problem description and convergence graphs among other data. If you put this and other run objects in a RunCollection you can easily do a performance analysis. Hint: You must obtain the latest stable binaries from our download page to run this code.

using System;

using System.Collections.Generic;

using System.Diagnostics;

using System.Linq;

using HeuristicLab.Analysis;

using HeuristicLab.Common;

using HeuristicLab.Core;

using HeuristicLab.Data;

using HeuristicLab.Encodings.PermutationEncoding;

using HeuristicLab.Problems.Instances;

using HeuristicLab.Problems.Instances.TSPLIB;

using HeuristicLab.Problems.TravelingSalesman;

using HeuristicLab.Optimization;

public class LAHCScript : HeuristicLab.Scripting.CSharpScriptBase {

public override void Main() {

var random = new HeuristicLab.Random.MersenneTwister();

var tsplib = new TSPLIBTSPInstanceProvider();

var instance = tsplib.GetDataDescriptors()

.Single(x => x.Name == "berlin52");

var tsp = tsplib.LoadData(instance);

var dm = new DistanceMatrix(tsp.GetDistanceMatrix());

Permutation best = null, current = null, candidate = null;

var candidateFitness = double.MaxValue;

var bestFitness = double.MaxValue;

var currentFitness = double.MaxValue;

IsBetter isBetter = (first, second, orEqual) => (first < second)

|| (orEqual && first == second);

InitializeFitness init = () => {

candidate = new Permutation(

PermutationTypes.RelativeUndirected, tsp.Dimension, random);

current = candidate;

best = candidate;

candidateFitness = TourLength(candidate, dm);

currentFitness = candidateFitness;

bestFitness = candidateFitness;

return candidateFitness;

};

NextFitness next = () => {

candidate = new Permutation(

PermutationTypes.RelativeUndirected, current);

var move = StochasticInversionSingleMoveGenerator

.Apply(candidate, random);

candidateFitness = currentFitness + TSPInversionMovePathEvaluator

.EvaluateByDistanceMatrix(candidate, move, dm);

// important to perform the move after the delta calculation

InversionManipulator.Apply(candidate, move.Index1, move.Index2);

return candidateFitness;

};

Accept accept = () => {

current = candidate;

currentFitness = candidateFitness;

if (isBetter(candidateFitness, bestFitness, orEqual: false)) {

best = candidate;

bestFitness = candidateFitness;

}

};

var run = LAHC(init, next, accept, isBetter, 5000);

run.Parameters.Add("Problem Name",

new StringValue(instance.Name));

run.Parameters.Add("Problem Type",

new StringValue(typeof(TravelingSalesmanProblem).Name));

run.Parameters.Add("Maximization", new BoolValue(false));

if (tsp.BestKnownQuality.HasValue)

run.Parameters.Add("BestKnownQuality",

new DoubleValue(tsp.BestKnownQuality.Value));

var solution = new PathTSPTour(new DoubleMatrix(tsp.Coordinates),

best, new DoubleValue(bestFitness));

run.Results.Add("Best Solution", solution);

HeuristicLab.MainForm.MainFormManager.MainForm.ShowContent(run);

}

private static double TourLength(Permutation perm, DistanceMatrix dm) {

var length = dm[perm.Last(), perm.First()];

for (var i = 1; i < perm.Length; i++) {

length += dm[perm[i - 1], perm[i]];

}

return length;

}

public delegate double InitializeFitness();

public delegate double NextFitness();

public delegate void Accept();

public delegate bool IsBetter(double first, double second, bool orEqual);

public static Run LAHC(InitializeFitness init, NextFitness next,

Accept accept, IsBetter isBetter, int listSize,

int minTries = 100000) {

var sw = Stopwatch.StartNew();

var cgraph_fe = new IndexedDataTable<double>("Convergence Graph FE");

var cgraph_wc = new IndexedDataTable<double>("Convergence Graph Time");

var crow_fe = new IndexedDataRow<double>("First-hit");

var crow_wc = new IndexedDataRow<double>("First-hit");

cgraph_fe.Rows.Add(crow_fe);

cgraph_fe.VisualProperties.XAxisLogScale = true;

cgraph_wc.Rows.Add(crow_wc);

cgraph_wc.VisualProperties.XAxisLogScale = true;

var bestFit = init();

var globalIter = 0;

var memory = new double[listSize];

for (var v = 0; v < listSize; v++) {

memory[v] = bestFit;

}

Tuple<int, double> last = null;

foreach (var s in

LAHC(next, accept, isBetter, bestFit, memory, minTries)) {

if (isBetter(s.Item2, bestFit, orEqual: false)) {

bestFit = s.Item2;

crow_fe.Values.Add(

Tuple.Create((double)globalIter + s.Item1, s.Item2));

crow_wc.Values.Add(

Tuple.Create(sw.ElapsedTicks / (double)Stopwatch.Frequency,

s.Item2));

}

last = s;

}

globalIter += last.Item1;

sw.Stop();

crow_fe.Values.Add(Tuple.Create((double)globalIter, bestFit));

crow_wc.Values.Add(

Tuple.Create(sw.ElapsedTicks / (double)Stopwatch.Frequency,

bestFit));

var run = new Run() { Name = "LAHC-" + listSize };

run.Parameters.Add("Algorithm Name", new StringValue("LAHC"));

run.Parameters.Add("ListSize", new IntValue(listSize));

run.Parameters.Add("MinTries", new IntValue(minTries));

run.Parameters.Add("Algorithm Type", new StringValue("LAHC"));

run.Results.Add("ExecutionTime", new TimeSpanValue(

TimeSpan.FromSeconds(sw.ElapsedTicks

/ (double)Stopwatch.Frequency)));

run.Results.Add("EvaluatedSolutions", new IntValue(globalIter));

run.Results.Add("BestQuality", new DoubleValue(bestFit));

run.Results.Add("QualityPerEvaluations", cgraph_fe);

run.Results.Add("QualityPerClock", cgraph_wc);

return run;

}

private static IEnumerable<Tuple<int, double>> LAHC(

NextFitness nextFitness, Accept doAccept,

IsBetter isBetter, double fit, double[] memory,

int minTries) {

var bestFit = fit;

var tries = 0;

var lastSuccess = 0;

var l = memory.Length;

while (tries < minTries || (tries - lastSuccess) < tries * 0.02) {

var nextFit = nextFitness();

var v = tries % l;

tries++;

var prevFit = memory[v];

var accept = isBetter(nextFit, fit, orEqual: true)

|| isBetter(nextFit, prevFit, orEqual: true);

if (accept && isBetter(nextFit, fit, orEqual: false))

lastSuccess = tries;

if (accept) {

if (isBetter(nextFit, bestFit, orEqual: false)) {

bestFit = nextFit;

yield return Tuple.Create(tries, bestFit);

}

fit = nextFit;

doAccept();

}

if (isBetter(fit, prevFit, orEqual: false))

memory[v] = fit;

}

yield return Tuple.Create(tries, bestFit);

}

}

Grammatical Evolution in HeuristicLab

The latest version of HeuristicLab now also includes a plugin for grammatical evolution (GE)! The plugin has been implemented by Sabine Winkler for her Bachelor's thesis, and she kindly contributed her implementation to HeuristicLab, so that we could integrate it into the stable development branch.

So far, the implementation supports two problems: symbolic regression and the artifical ant problem for the Santa Fe trail. However, additional problem types for grammatical evolution can be added easily in a similar way as for tree-based GP (see our documentation on implementing GP problems)

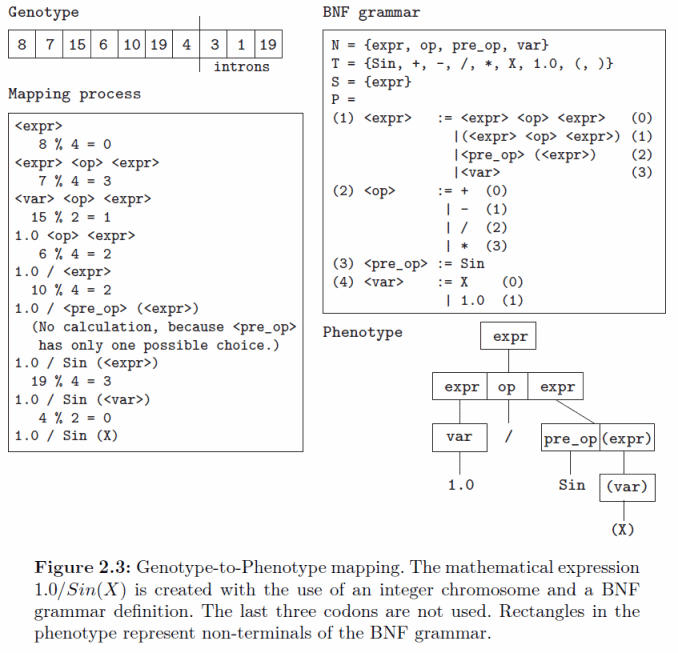

The implementation of GE reuses existing classes and methods for tree-based GP and acts mainly as a translator between the integer vector encoding and the tree encoding. In the mapping process the grammar is used to build a tree from the integer vector. The concept of the mapping process is shown in the following diagram:

The GE plugin also provides different multiple variants of mappers that have been described in literature including depth-first, breath-first, and the π-GE mappers. In GE the genotype to phenotype mappers use a grammar to create a tree from an integer vector. The elements of the integer vector are read from left to right and the tree is built starting from the root symbol of the grammar. For each non-terminal symbol the mapper reads one element from the vector and chooses the alternative for extending the tree based on this value. An example for the depth-first mapper is shown in the following figure.

In GE the genotype to phenotype mapping is prone to the so-called ripple-effect which describes the phenomenon that a small change of the integer-vector might lead to a completely different tree after mapping. This hampers the search process and could lead to premature convergence of the algorithm.

We are happy about contributions to the grammatical evolution implementation or to HeuristicLab in general. So, if you have implemented an algorithm or problem or even only a custom operator feel free to contribute!

Figures (C) Sabine Winkler, with kind permission.

CMA-ES Implementation in HeuristicLab

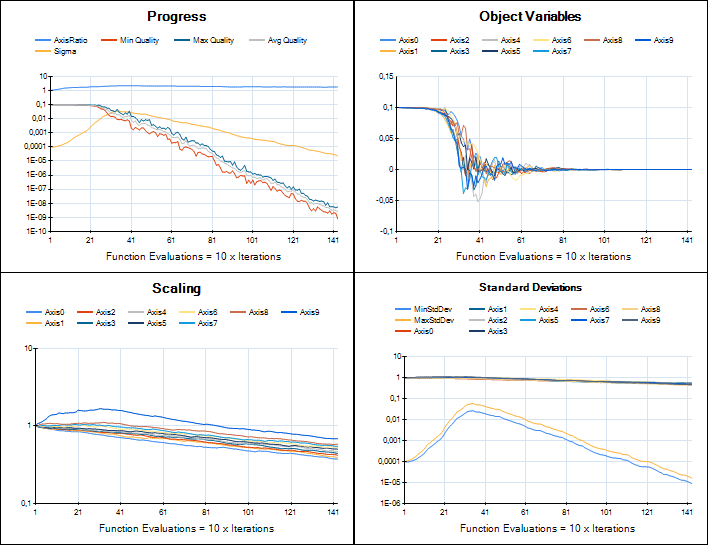

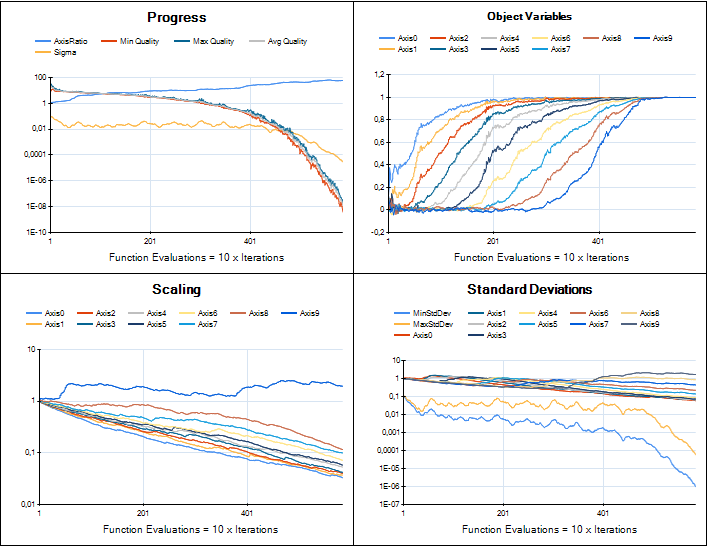

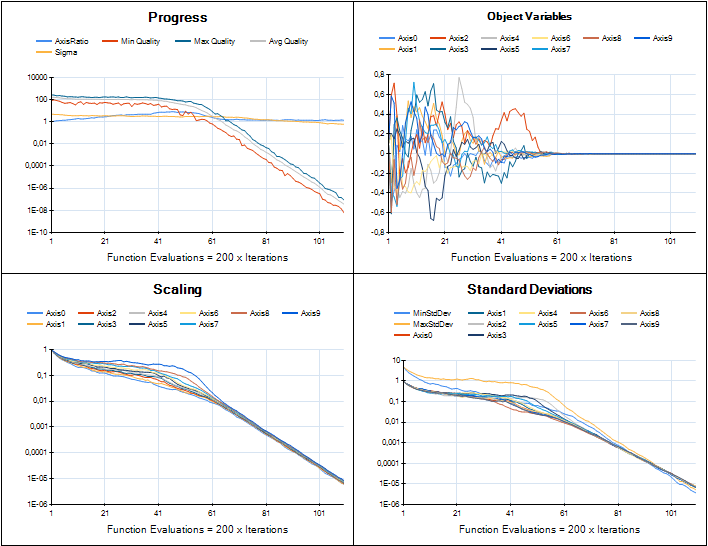

We are working on providing an implementation of CMA-ES in HeuristicLab for real-valued optimization. The developments are tracked in ticket #1961 and the implementation is based on Hansen's Java implementation. Porting that code to C# is now almost complete and the integration into HeuristicLab looks very nice. Although most of the original code parts could be preserved the integration required to break some parts apart and reassemble them in a different way. I was very pleased to see Hansen providing "trusted output" image samples for implementers to check if their version performed similar. Here is a comparison of HeuristicLab's implementation (left) with the trusted output images (right).

Sphere

Rosenbrock

Rastrigin

The plugin is still not part of the trunk, but available in a branch which can be compiled by anyone. I attach a current binary version of the plugin to this post if you want to try it (add it to the latest daily build, unblock the dll after you download it). CMA-ES currently can be used to solve any problem that is based on the Encodings.RealVector plugin and uses an IRealVectorCreator as solution creator. Of course the implementation may still change and may break files.

rss

rss